Many years ago, I was sending high-altitude balloons into the sky with cameras attached, and I wanted them to broadcast their images live to the ground. The solution I used was analog SSTV, which hams with good antennas could receive. I also experimented with digital images over APRS. The idea was to decentralize reception by using the many stations already operating and bridged to the internet.

Over the past year there has been a boom of local hams using Meshtastic, which is based on long-range LoRa communication and packet relaying. I wondered if I could revisit this project using the new technology.

TL;DR: it’s not that easy.

Using AI

I asked Claude Code to build such a framework. It suggested using WebP instead of JPEG, as it has a better compression ratio. That sounded like a good idea, and a Python simulation was ready in no time. But as it turns out, the loss of a single packet can ruin the rest of the image, as later packets depend on earlier ones (I’m not an expert on WebP, so I might be wrong).

Back to good old JPEG. I asked Claude to port the SSDV project I’d used before to pure Python. It spent some time (and a lot of credits) but managed to pull it off. It wasn’t pretty, but it worked. The code used many new libraries and was even wrapped into an object-oriented class, but its low-level C roots were evident. After a few tweaks and modifications, I was happy. In some places I just changed the high-level application code and let Claude make the matching adjustments to the library. I found that way of working very easy and enjoyable.

When the code looked decent enough, it was time to move to the real world and connect to Meshtastic. Once again, I was impressed: the AI found a suitable library that connects over both serial and Bluetooth, and it made the modifications needed to glue all the pieces together, like adjusting the packet size and adding delays so as not to flood the network. I was ready to experiment, and the results looked horrible.
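To give an idea of what that glue looks like, here is a minimal sketch of splitting a byte stream into fixed-size packets and pacing them with a delay. It is not the project's actual code: `chunk_payload`, `send_paced`, and the default sizes and delays are names and values I made up for illustration. The `send` argument is any callable that transmits one packet; with the Meshtastic Python library it could be a small wrapper around the interface's `sendData` method, but that wiring is left out here and is an assumption.

```python
import time
from typing import Callable, Iterator


def chunk_payload(data: bytes, chunk_size: int) -> Iterator[bytes]:
    """Yield consecutive slices of `data`, each at most `chunk_size` bytes."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    for offset in range(0, len(data), chunk_size):
        yield data[offset:offset + chunk_size]


def send_paced(
    data: bytes,
    send: Callable[[bytes], None],
    chunk_size: int = 200,       # hypothetical default, tune for your link
    delay_s: float = 2.0,        # hypothetical pause between packets
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Send `data` one chunk at a time, pausing between packets.

    Returns the number of packets handed to `send`.
    """
    count = 0
    for chunk in chunk_payload(data, chunk_size):
        if count:
            # Pause before every packet except the first one.
            sleep(delay_s)
        send(chunk)
        count += 1
    return count
```

Passing `sleep` in as a parameter makes it easy to dry-run the pacing logic on a desk without waiting, which is handy when sweeping packet sizes and delays.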

Stress testing the network

So I needed to dig deeper into Meshtastic, which I knew very little about.

Meshtastic is an open‑source firmware that creates a self‑healing LoRa‑based mesh network for simple messaging and data relaying. LoRa is a long‑range, ultra‑low‑bitrate radio modulation (roughly 0.3 to 5 kbps, typically about 1 kbps) designed for sending small data packets over many kilometers.

While there is a separation into channels and app ports, all information is transmitted on the same frequency using Chirp Spread Spectrum modulation. App ports function more as a “packet type” header, and channels are filtered logically in software.

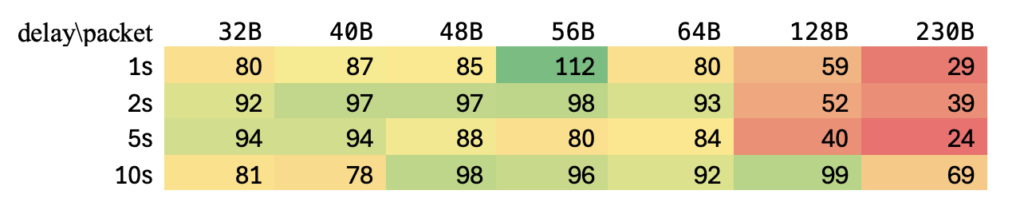

I started testing the system by transmitting packets of varying sizes with varying delays between them.

While network congestion does not make a big difference, since Meshtastic has Channel Activity Detection, it seemed that packet size does matter.

I found that while the protocol allows up to 233 bytes of data, in reality there is a big chance of such packets not going through. This is a bit simplistic, as I did not take into account retransmission of packets by neighboring nodes, but my two radios were only a few meters apart, so I think we’re OK. Also, even with smaller packets, we can expect about 20% loss.

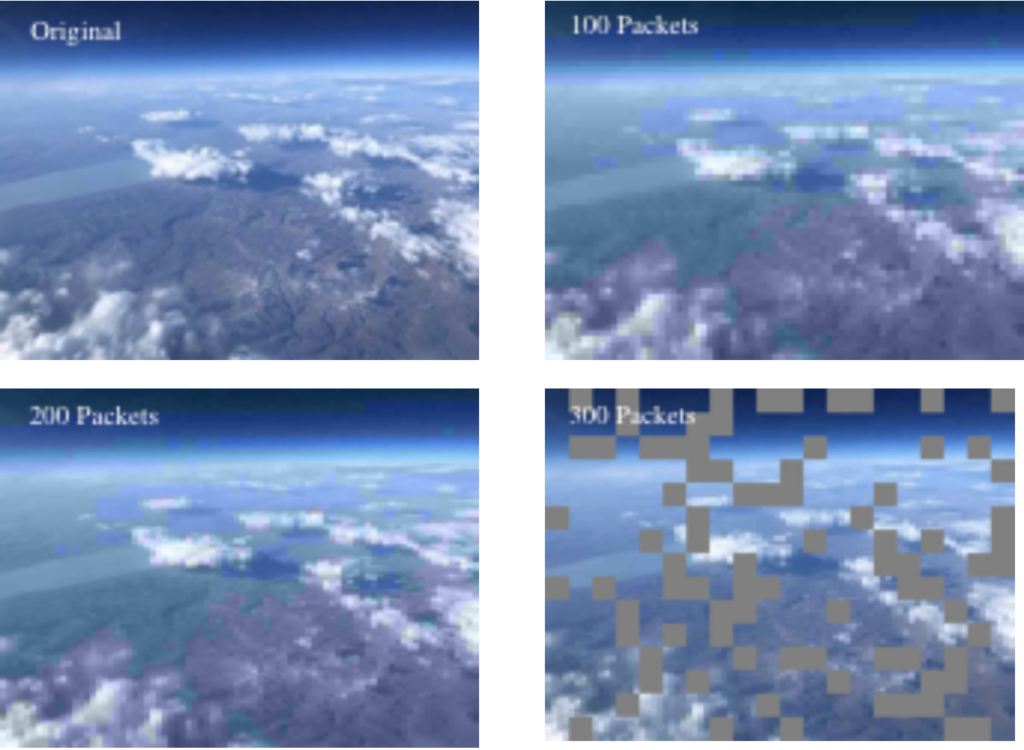
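To see why 20% loss is such a problem for an image spread over many packets, here is a back-of-the-envelope sketch. It assumes each packet is lost independently with the same probability, which is a simplification and not something I measured; the function names are mine.

```python
def p_all_delivered(num_packets: int, loss_rate: float) -> float:
    """Probability that every packet arrives, assuming independent losses."""
    return (1.0 - loss_rate) ** num_packets


def expected_lost(num_packets: int, loss_rate: float) -> float:
    """Average number of packets lost out of `num_packets`."""
    return num_packets * loss_rate


if __name__ == "__main__":
    for n in (10, 50, 100):
        print(n, p_all_delivered(n, 0.20), expected_lost(n, 0.20))
```

Under that assumption, a 100-packet image loses about 20 packets on average, and the chance of receiving all of them is effectively zero. Any format where one missing packet damages what follows is a non-starter.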

I opted for 48-byte packets and went back to the SSDV code. I removed every bit I could to reduce overhead, making packets as small as possible and keeping the number of transmissions down. But still, at 20% packet loss on a small image, the result is unpleasant to the eye. SSDV does contain forward error correction, but it’s used to mend badly received packets. LoRa drops these packets before we have a chance to correct them, so any FEC method has to work in the inter-packet realm.
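As an illustration of what "inter-packet" FEC means, here is the simplest possible scheme: one XOR parity packet per group of equally sized data packets, which can rebuild any single lost packet in that group. This is only a sketch of the idea and not the method the project ended up with; real systems usually use stronger erasure codes such as Reed-Solomon or fountain codes. All names below are hypothetical.

```python
from typing import List, Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equally sized byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(packets: List[bytes]) -> bytes:
    """Build one parity packet as the XOR of all data packets in a group."""
    size = len(packets[0])
    if any(len(p) != size for p in packets):
        raise ValueError("all packets in a group must have the same size")
    parity = bytes(size)  # all zeros
    for p in packets:
        parity = xor_bytes(parity, p)
    return parity


def recover(received: List[Optional[bytes]], parity: bytes) -> List[Optional[bytes]]:
    """Rebuild a single missing packet (marked None) from the parity packet.

    If zero or more than one packet is missing, the list is returned as is:
    a single parity packet cannot repair more than one erasure.
    """
    missing = [i for i, p in enumerate(received) if p is None]
    if len(missing) != 1:
        return list(received)
    rebuilt = parity
    for p in received:
        if p is not None:
            rebuilt = xor_bytes(rebuilt, p)
    out = list(received)
    out[missing[0]] = rebuilt
    return out
```

The important property is that the receiver only needs to know which packets are missing, not to repair damaged ones, which matches how LoRa hands us packets: intact or not at all.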

Building a working solution from ground up

I went back to the drawing board and asked every AI agent I know to come back with a plan. Some ideas were unrealistic. Some looked interesting at first but turned out to be impractical. One idea that came back from several agents was layering the resolution, so that at least we get a low-res image. It sounds right, but we cannot count on receiving the first packets, so we cannot have any inter-packet dependency. Other ideas were science fiction altogether, like building an image bank based on aerial photography of land, sea, etc. and sending weights accordingly.

In the end it was Copilot that came up with a reasonable proposal in response to the following prompt:

lets devise an image compression method that will be used over an unreliable packet network. the image size is 320×240, payload size should be 48 bytes + header. the network handles packet validity so no CRC is needed. whole image should be 100-200 packets. assume 25% packet loss. so no inter-packet dependency. it is possible add redundancy to recover packet loss

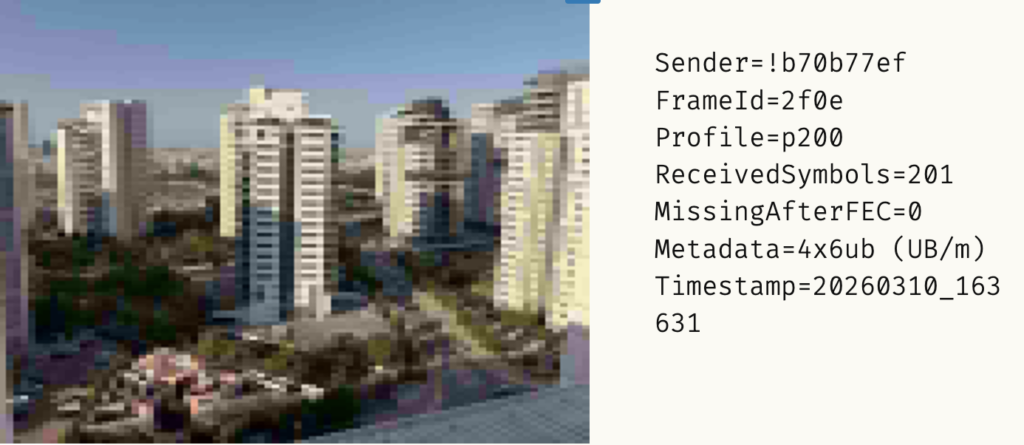

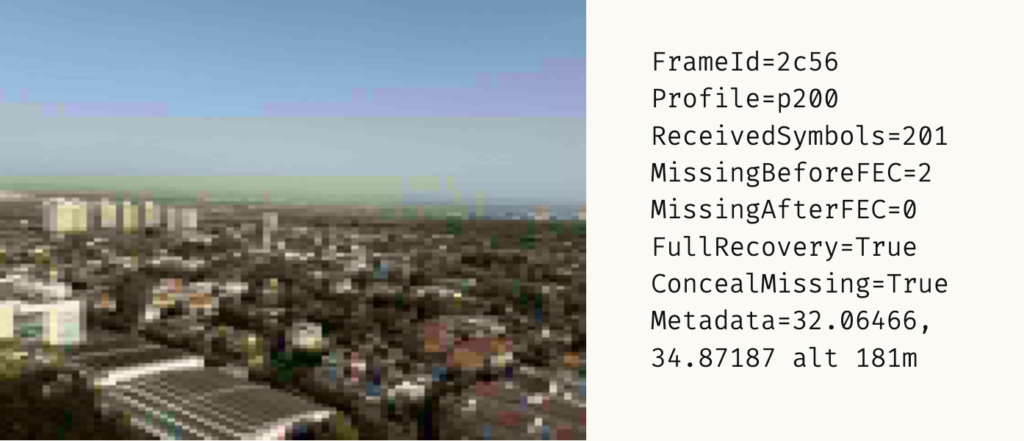

And it worked! We experimented with the number of packets. Allowing for color and FEC, we found a sweet spot at 200 data + 100 repair packets. Below that the image was too blurry; more than that made little improvement.
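To put those numbers in perspective, here is a rough budget calculation. It counts payload bytes only and ignores headers, preambles and the delays between packets, and the 1 kbps bitrate is just the "typical" figure mentioned earlier, so treat the airtime as an optimistic lower bound. The function name and dictionary keys are mine.

```python
def image_budget(
    width: int = 320,
    height: int = 240,
    payload_bytes: int = 48,
    data_packets: int = 200,
    repair_packets: int = 100,
    bitrate_bps: float = 1000.0,  # assumed typical LoRa bitrate, not measured
) -> dict:
    """Rough payload-only budget for one image."""
    pixels = width * height
    data_bits = data_packets * payload_bytes * 8
    total_bits = (data_packets + repair_packets) * payload_bytes * 8
    return {
        "bits_per_pixel": data_bits / pixels,
        "repair_overhead": repair_packets / data_packets,
        "min_airtime_s": total_bits / bitrate_bps,
    }


if __name__ == "__main__":
    print(image_budget())
```

With the defaults, 200 payloads of 48 bytes come to 76,800 bits, which is exactly 1 bit per pixel for a 320×240 image, and the 300 packets need at least about 115 seconds of airtime at the assumed bitrate.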

We also implemented automatic missing-tile concealment (nearest-tile fill), so even when FEC can’t fully recover a tile, the final image won’t have gray holes.
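Nearest-tile fill can be sketched in a few lines. This is a simplified illustration and not the code from the repository: it assumes the decoded image is held as a 2D grid of tiles where a missing tile is `None`, and it measures "nearest" by Manhattan distance on the grid. Both choices are my assumptions.

```python
from typing import List, Optional, TypeVar

T = TypeVar("T")


def conceal_missing_tiles(grid: List[List[Optional[T]]]) -> List[List[Optional[T]]]:
    """Return a copy of `grid` where each missing tile (None) is replaced
    by the nearest received tile, using Manhattan distance.

    Ties go to the first candidate in row-major order. If no tile was
    received at all, the grid is returned unchanged.
    """
    rows = len(grid)
    cols = len(grid[0]) if rows else 0
    known = [(r, c) for r in range(rows) for c in range(cols)
             if grid[r][c] is not None]
    out = [list(row) for row in grid]
    if not known:
        return out
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] is None:
                nr, nc = min(known, key=lambda rc: abs(rc[0] - r) + abs(rc[1] - c))
                # Copy from the original grid so fills never chain off other fills.
                out[r][c] = grid[nr][nc]
    return out
```

This brute-force search is fine for a grid of a few hundred tiles; the tiles themselves can be any object, such as a small block of pixels.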

Next, I set up a base node receiver at home and took the transmitter on the road.

Final thoughts

I’ve noticed very different programming styles between agents. Copilot creates very robust code, with fault checks and advanced language features, but it is also overcomplicated and hard to understand at times. Claude creates lighter code that I felt was easier to follow and maintain.

This is definitely a square-peg-in-a-round-hole kind of project. The Meshtastic network was not designed for such an application, and I’m sure its developers would have nightmares if they knew what I tried to do. Also, the image size and quality, along with the long transmission time, make it almost impossible to put this to practical everyday use. But for me, making a system do something it was not supposed to do is a very interesting and valuable lesson.

If you’d like to explore the code, experiment with your own Meshtastic setup, or contribute improvements, you can find the full project on GitHub: https://github.com/idoroseman/MeshCam. I’m always happy to hear from fellow tinkerers and radio geeks, so feel free to share your thoughts and results.
