As a young engineer I wrote real-time embedded image-processing software for a living. We used the DSP chips for all they could offer, and used to rewrite parts of the algorithms in assembly so we could shave off a few cycles per pixel and squeeze out a bit more processing power.

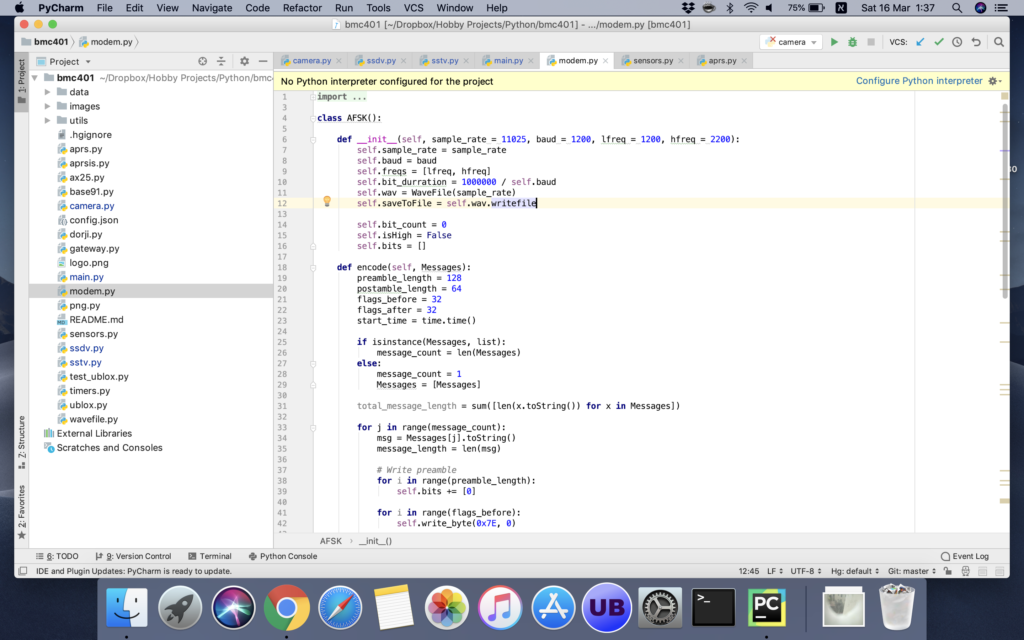

Recently I’ve been writing a piece of software for my High Altitude Balloon. The original code was written in C and bash, but was hard to manage, so for this release I used Python. For most uses this is a great choice: it’s robust, easy to write and debug, and its flexible data structures are a pleasure to use.

While Python is not considered a “fast” language because it runs through an interpreter, it was quite all right for most of my not-so-urgent needs.

But then I got to a piece of code that converts a data stream to an audio file that is transmitted back to the ground. When I needed to send a large image file, this modem took several minutes to do the job. This was not OK, so I started to dig into the code to see what was going on.

It took some simple profiling to find the culprit. I wasn’t surprised to find out it was this function:

```python
def make_and_write_freq(self, High):
    # write 1 bit, which will be several values from the sine wave table
    if High:
        step = (512 * self.hfreq << 7) / (self.cycles_per_bit * self.baud)
    else:
        step = (512 * self.lfreq << 7) / (self.cycles_per_bit * self.baud)

    for i in range(self.cycles_per_bit):
        # fwrite( & (_sine_table[(phase >> 7) & 0x1FF]), 1, 1, f);
        v = _sine_table[(self.phase >> 7) & 0x1FF] * 0x80 - 0x4000
        if High and self.APRS_Preemphasis:
            v *= 0.65
        else:
            v *= 1.3
        # int16_t v = _sine_table[(phase >> 7) & 0x1FF] * 0x100 - 0x8000;
        v = int(v)
        self.buffer += [ (v & 0xff)
```
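The profiling step mentioned before the listing can be done with the standard library alone. This is only a sketch of one way to do it, not necessarily the tool used here: `slow_encode` is a hypothetical stand-in for the modem code, and `profile_call` is an illustrative helper built on `cProfile` and `pstats`.

```python
import cProfile
import io
import pstats


def slow_encode(bit_count):
    # Hypothetical stand-in for the modem's per-bit work.
    buffer = []
    for i in range(bit_count):
        buffer += [i & 0xFF]
    return buffer


def profile_call(func, *args):
    """Run func under cProfile and return (result, text report)."""
    profiler = cProfile.Profile()
    profiler.enable()
    try:
        result = func(*args)
    finally:
        profiler.disable()
    # Collect the report in a string instead of printing it directly.
    stream = io.StringIO()
    stats = pstats.Stats(profiler, stream=stream)
    stats.sort_stats("cumulative").print_stats(5)
    return result, stream.getvalue()


if __name__ == "__main__":
    _, report = profile_call(slow_encode, 100_000)
    print(report)
```

Sorting by cumulative time puts the function that dominates the run near the top of the report, which is usually enough to spot a hot loop like the one above.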

So my first suspect was the division in the step calculation. While your average laptop has a strong processor, some smaller embedded processors lack the math coprocessor that helps with those hard-to-do calculations. Python also lacks a way to define constants, so it’s hard for the optimiser to guess that it doesn’t need to repeat the costly division.

Performing the calculation only once for each of the two values of High shortened the time from 400 seconds to around 300. Encouraged by this, I moved on.
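A minimal sketch of that change, assuming the step only depends on values that stay fixed for the whole transmission. The class name `Modem`, the attributes `step_high` and `step_low`, the method `step_for`, and the default values are all assumptions for illustration; integer division (`//`) is also an assumption, chosen so the step stays an integer under Python 3.

```python
class Modem:
    """Hypothetical stand-in for the modem class; attribute names mirror the listing."""

    def __init__(self, lfreq=1200, hfreq=2200, baud=1200, cycles_per_bit=40):
        self.lfreq = lfreq
        self.hfreq = hfreq
        self.baud = baud
        self.cycles_per_bit = cycles_per_bit
        # Do each division once here instead of once per transmitted bit.
        denominator = cycles_per_bit * baud
        self.step_high = (512 * hfreq << 7) // denominator
        self.step_low = (512 * lfreq << 7) // denominator

    def step_for(self, high):
        # Per-bit work is now a plain attribute lookup.
        return self.step_high if high else self.step_low
```

If any of the four parameters can change at run time, the two steps would have to be recomputed at that point.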

Searching the net I found a few general tips for speeding up Python. For convenience I switched back to my MacBook Pro, where the baseline was 4.5 seconds.

The first thing I tried was to avoid function calls and string concatenation, so I prepared all the bits beforehand in an array and passed it to the function in one shot. That cut the time to around 3.7 seconds.
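The idea can be sketched roughly like this. Both function names are hypothetical, the least-significant-bit-first order is an assumption, and the output is reduced to sine-table indices to keep the example short.

```python
def bytes_to_bits(data):
    """Expand bytes into a flat list of 0/1 values, LSB first (assumed order)."""
    return [(byte >> i) & 1 for byte in data for i in range(8)]


def encode_bits(bits, step_high, step_low, samples_per_bit):
    """Turn a whole list of bits into sine-table indices in a single call."""
    indices = []
    phase = 0
    for high in bits:
        step = step_high if high else step_low
        for _ in range(samples_per_bit):
            # Same phase-to-index mapping as the listing: 9-bit table index.
            indices.append((phase >> 7) & 0x1FF)
            phase += step
    return indices


if __name__ == "__main__":
    bits = bytes_to_bits(b"\x01\x80")
    print(len(encode_bits(bits, 3003, 1638, 40)))
```

One call per message replaces one call per bit, so the function-call overhead is paid once rather than thousands of times.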

The greatest improvement came from switching everything to local variables. It turns out globals and class members are expensive to access. Doing all the math on a local buffer and only then appending it to self.buffer had an impressive impact and cut the time by another second.
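A rough sketch of the local-variable pattern, with a hypothetical class `LocalBufferModem` and method `write_samples`. The sample value is simplified to the table index so the example stays self-contained.

```python
class LocalBufferModem:
    """Hypothetical class showing attribute access hoisted out of the hot loop."""

    def __init__(self):
        self.buffer = []
        self.phase = 0

    def write_samples(self, step, count):
        # Copy attributes into locals once; local lookups are cheaper
        # than repeated self.<name> lookups inside the loop.
        phase = self.phase
        local = []
        append = local.append
        for _ in range(count):
            append((phase >> 7) & 0x1FF)
            phase += step
        # Write back to the instance only once, after the loop.
        self.phase = phase
        self.buffer.extend(local)


if __name__ == "__main__":
    modem = LocalBufferModem()
    modem.write_samples(step=3000, count=4)
    print(modem.buffer, modem.phase)
```

The instance state is touched twice per call instead of several times per sample, which is where the saving comes from.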

I tried commenting out lines one at a time to see what impact each had on the running time. While the output was no longer valid, it did show me that no single operation was consuming too much time.

A surprising finding concerned the sine table. The original code was written by Dave Akerman for the HABDUINO. It was designed for the Arduino’s AVR, which lacks floating-point hardware, so using a lookup table speeds things up. On the Raspberry Pi, in Python, calling math.sin() turns out to be not as bad.
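For comparison, here are the two approaches side by side. The 512-entry table size follows the `0x1FF` mask in the listing. The 0..255 scaling and the names `sine_table`, `sample_from_table` and `sample_from_sin` are assumptions made for this sketch.

```python
import math

TABLE_SIZE = 512  # matches the 0x1FF index mask in the listing

# Assumed scaling: unsigned 8-bit values centred on the midpoint.
sine_table = [
    int(round(127.5 + 127.5 * math.sin(2 * math.pi * i / TABLE_SIZE)))
    for i in range(TABLE_SIZE)
]


def sample_from_table(phase):
    """Look the sample up in the precomputed table."""
    return sine_table[(phase >> 7) & 0x1FF]


def sample_from_sin(phase):
    """Compute the same sample directly with math.sin()."""
    index = (phase >> 7) & 0x1FF
    return int(round(127.5 + 127.5 * math.sin(2 * math.pi * index / TABLE_SIZE)))
```

Both functions return the same value for any phase, so timing them with the `timeit` module on the target board is a fair way to see which one is cheaper there.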

I moved back to the Raspberry Pi. Processing time was still in the hundreds of seconds. The last thing I did was reduce the audio sampling rate. There’s no need to calculate CD-quality audio at 44K samples per second if you’re sending it through a narrow-band FM channel. This cut the time down to around a minute, which is long but acceptable.
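The effect of the sampling rate is easy to estimate, since the work grows with the number of samples generated. In this sketch the helper `samples_needed`, the 1200 baud rate and the lower rate of 11025 Hz are assumptions; the post does not say which rate was chosen in the end.

```python
def samples_needed(num_bytes, baud, sample_rate):
    """Estimate how many audio samples are generated for a payload."""
    bits = num_bytes * 8
    samples_per_bit = sample_rate // baud  # whole samples per transmitted bit
    return bits * samples_per_bit


if __name__ == "__main__":
    cd_quality = samples_needed(1000, 1200, 44100)
    reduced = samples_needed(1000, 1200, 11025)
    print(cd_quality, reduced, cd_quality / reduced)
```

With these assumed numbers the lower rate needs a quarter of the samples. The rate still has to stay above twice the highest tone frequency, so a 2200 Hz tone needs more than 4400 samples per second.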

Maybe I’ll try to go back to the C implementation and wrap it as a Python package, but for now it will have to do.
